The Uncomfortable Truth

Between 70% and 88% of enterprise AI pilots never reach production. They don't fail spectacularly — they simply fade away, consuming budgets and eroding confidence in AI's transformative potential.

Picture this: A boardroom, six months ago. The CEO has just seen a compelling demo. The CTO is nodding enthusiastically. The CFO is cautiously optimistic. "Let's run a pilot," someone says. Everyone agrees. The AI roadmap gets a bullet point, a budget line, and a champion.

Fast forward to today. That pilot? It's sitting in a Jupyter notebook on a data scientist's laptop. The champion has moved on to other priorities. The vendor is sending follow-up emails no one replies to. The boardroom doesn't talk about it anymore.

This isn't a hypothetical. It's the most common outcome for enterprise AI initiatives. And if you're honest, you've probably seen it happen in your own organization.

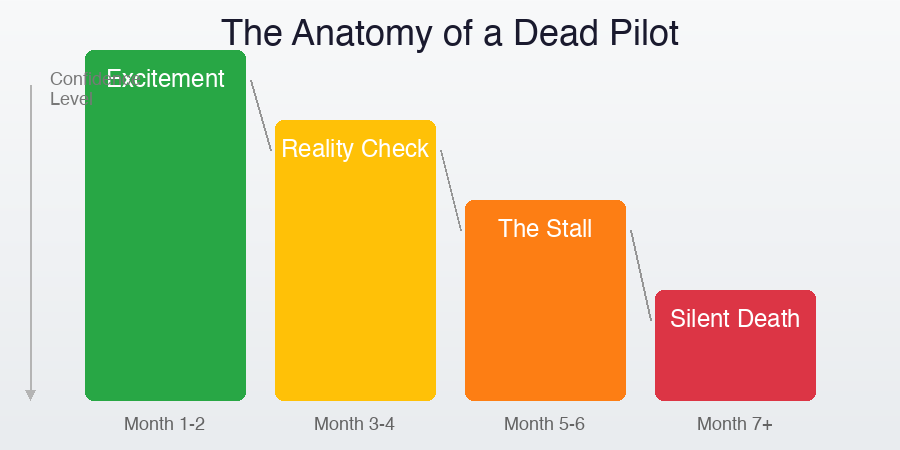

The Anatomy of a Dead Pilot

AI pilots don't die in one dramatic moment. They decompose through a predictable sequence that plays out across thousands of enterprises worldwide.

Vendor demos dazzle. Internal presentations get standing ovations.

The AI vendor shows what's possible using pristine demo data. Stakeholders see the future. Budget gets allocated. A cross-functional team is assembled. Everyone is optimistic. The pilot scope is ambitious because "we might as well test the full capability."

Real data meets the model. Results are... different.

The team discovers their actual data looks nothing like the demo data. Fields are inconsistent. Formats vary wildly. Legacy systems export in unexpected ways. The model's accuracy drops from 95% in demos to 60% on real data. IT raises security concerns. Legal questions data residency. The cross-functional team starts missing meetings.

Integration becomes a multi-headed beast.

Even when the model works, connecting it to existing workflows proves harder than anyone anticipated. APIs don't exist or are poorly documented. The ERP system requires custom connectors. Users who were supposed to adopt the tool are still using their old spreadsheets because "the new system doesn't handle edge cases." The champion's quarterly review focuses on other metrics.

No formal announcement. No post-mortem. Just... silence.

The pilot doesn't get officially killed — that would require someone to admit it failed. Instead, the license renewal gets "deprioritized." The data scientist gets reassigned. The vendor contract expires. A year later, someone proposes a new AI initiative and hears: "We tried that. It didn't work."

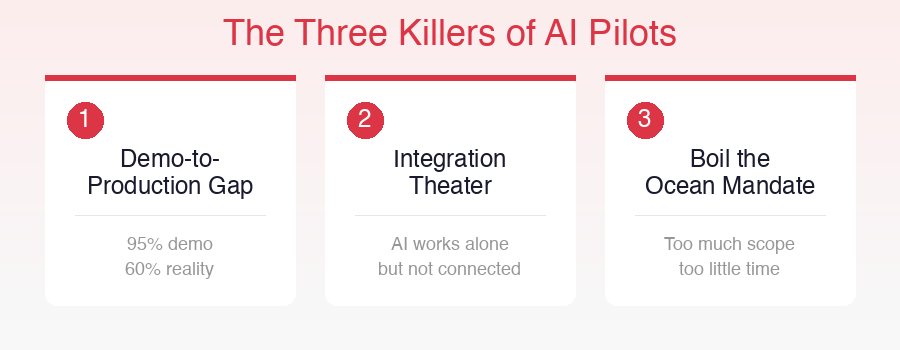

The Three Killers

After observing dozens of enterprise AI implementations — the ones that survived and the far greater number that didn't — three root causes emerge consistently.

Killer #1: The Demo-to-Production Gap

Enterprise vendors have perfected the art of the demo. Clean data, controlled conditions, impressive metrics. But production environments are hostile territory for AI:

- Data quality varies — Real invoices have coffee stains, handwritten notes, and fields in languages nobody mentioned

- Edge cases multiply — The demo handled 10 document types; production sees 200 variations of the same document type

- Volume changes everything — Processing 50 test documents is different from processing 50,000 production documents with SLAs

The gap is structural. Demo environments are designed to sell. Production environments are designed to survive. The skills and infrastructure needed for each are fundamentally different.

Killer #2: Integration Theater

Many pilots demonstrate standalone capability beautifully but never actually connect to the systems that matter. The AI can extract invoice data with 95% accuracy — but if that data still needs to be manually verified and re-keyed into SAP, you haven't automated anything. You've just added a step.
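A rough back-of-the-envelope sketch shows why a 95% figure still leaves people re-checking documents. It assumes the 95% is a per-field accuracy, that errors on different fields are independent, and that an invoice carries 20 fields. All three are illustrative assumptions, not figures from any specific pilot.

```python
def share_of_clean_documents(field_accuracy: float, fields_per_document: int) -> float:
    """Probability that every field on a document is correct.

    Assumes field errors are independent, which is a simplification.
    """
    return field_accuracy ** fields_per_document


# Hypothetical invoice with 20 extracted fields at 95% per-field accuracy.
clean_share = share_of_clean_documents(0.95, 20)  # about 0.36
```

Under those assumptions only about a third of invoices come through with no error at all, so someone still has to look at the rest.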

Real integration means:

- Data flows automatically from source to destination

- Exceptions are handled programmatically, not manually

- The existing workflow actually changes (not just gets an AI appendage)

- Users interact with familiar tools, not a separate AI interface

Most pilots never reach this stage because integration is treated as a Phase 2 problem. But without integration, there is no value delivery — and without value delivery, there is no Phase 2.
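As a minimal sketch of what "exceptions are handled programmatically" could look like, the routine below decides whether an extracted invoice is posted automatically or sent to a review queue. The `ExtractedInvoice` shape, the required field names, and the 0.90 confidence threshold are all hypothetical; a real system would take them from its own extraction output and ERP requirements.

```python
from dataclasses import dataclass

# Hypothetical cut-off: a field below this confidence is not trusted.
CONFIDENCE_THRESHOLD = 0.90


@dataclass
class ExtractedInvoice:
    invoice_id: str
    fields: dict       # field name -> extracted value
    confidence: dict   # field name -> model confidence in [0, 1]


def route(invoice, required=("vendor", "total", "due_date")):
    """Return ("post", []) or ("review", [names of problem fields])."""
    problems = [
        name
        for name in required
        if not invoice.fields.get(name)
        or invoice.confidence.get(name, 0.0) < CONFIDENCE_THRESHOLD
    ]
    return ("review", problems) if problems else ("post", [])
```

The point of the design is that the review queue receives only the problem fields, so a person fixes one value instead of re-keying the whole document.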

Killer #3: The Boil-the-Ocean Mandate

Ambition is the most well-intentioned killer. Executives want transformative results. Vendors want large deals. Together they design pilots that try to solve everything at once:

- "Let's process ALL document types across ALL departments"

- "The pilot should demonstrate end-to-end automation"

- "We need to show ROI across the entire organization"

The result? A scope so large that no reasonable timeline can deliver it, no reasonable budget can fund it, and no reasonable team can staff it. The pilot becomes a mini digital transformation program — and we know how those usually end.

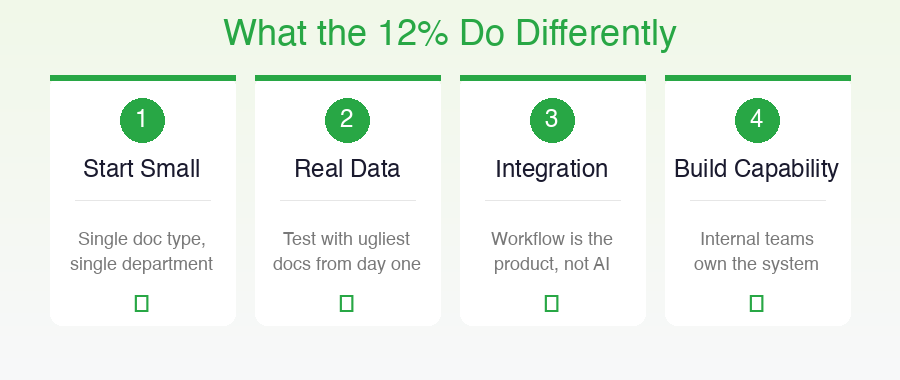

What the Survivors Do Differently

The 12% to 30% of pilots that make it to production don't just get lucky. They follow patterns that are surprisingly consistent and surprisingly counter-intuitive.

1. They Start Embarrassingly Small

Successful implementations typically begin with a single document type in a single department. Not because the technology can't handle more, but because the organization can't handle more.

A Fortune 500 company we observed started its document intelligence pilot with one thing: vendor invoices in accounts payable. Not all invoices. Not all vendors. Just the top 20 vendors, who represented 60% of invoice volume. The team achieved 94% straight-through processing (invoices posted with no manual touch) within 8 weeks.

Only after proving value in that narrow scope did they expand — first to more vendors, then to purchase orders, then to other departments. Each expansion was justified by demonstrated results, not projected ones.
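One way to pick a starting scope like "the top vendors covering most of the volume" is to rank vendors by invoice count and stop once a target share is covered. The sketch below assumes you can export invoice counts per vendor; the function name and the sample numbers are made up for illustration.

```python
def smallest_vendor_set(invoice_counts, target_share):
    """Pick the highest-volume vendors until they cover target_share of invoices.

    invoice_counts: dict of vendor name -> number of invoices.
    Returns (chosen vendors, share of total volume they cover).
    """
    total = sum(invoice_counts.values())
    if total == 0:
        return [], 0.0
    chosen, covered = [], 0
    ranked = sorted(invoice_counts.items(), key=lambda kv: kv[1], reverse=True)
    for vendor, count in ranked:
        if covered >= target_share * total:
            break
        chosen.append(vendor)
        covered += count
    return chosen, covered / total


# Made-up volumes for five vendors.
counts = {"A": 500, "B": 300, "C": 100, "D": 60, "E": 40}
pilot_vendors, share = smallest_vendor_set(counts, target_share=0.6)
```

The same ranking can be rerun at each expansion step with a higher target share, which keeps every scope decision tied to measured volume.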

2. They Demand Real Data from Day One

Survivors refuse to evaluate AI systems on demo data. They insist on testing with their ugliest, messiest, most problematic real documents before committing to a pilot.

The Litmus Test: If the vendor's system can't handle your worst documents during evaluation, it definitely won't handle them in production. Any vendor confident in their technology will welcome this challenge.
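A simple way to run this litmus test is to score the same system on two hand-labeled sets, the vendor's demo documents and your own worst documents, and compare the numbers. The sketch below assumes predictions and labels are available as plain dictionaries keyed by document ID and uses exact-match scoring. Both are simplifying assumptions.

```python
def field_accuracy(predictions, ground_truth):
    """Share of labeled fields that were extracted exactly right.

    predictions, ground_truth: dict of doc_id -> {field name: value}.
    A field missing from the predictions counts as wrong.
    """
    total = 0
    correct = 0
    for doc_id, expected in ground_truth.items():
        predicted = predictions.get(doc_id, {})
        for name, value in expected.items():
            total += 1
            if predicted.get(name) == value:
                correct += 1
    return correct / total if total else 0.0


# Made-up labels and predictions for two messy invoices.
truth = {
    "inv-1": {"total": "100.00", "vendor": "Acme"},
    "inv-2": {"total": "55.10", "vendor": "Globex"},
}
predicted = {
    "inv-1": {"total": "100.00", "vendor": "Acme"},
    "inv-2": {"total": "56.10"},
}
worst_docs_accuracy = field_accuracy(predicted, truth)
```

If the score on your worst documents is far below the score on the demo set, that difference is the demo-to-production gap, measured before any contract is signed.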

3. They Treat Integration as the Product

For survivors, the AI model is not the product. The integrated workflow is the product. They design pilots around the complete data flow, not around the AI capability in isolation.

This means integration architecture is defined before the pilot begins, not after. API requirements are documented. Data mapping is completed. Exception handling workflows are designed. The pilot's success criterion isn't "the model is accurate"; it's "the process works end-to-end without manual intervention."
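An end-to-end criterion like this can be made measurable as a straight-through rate: the share of documents that reached the destination system with no human touch. The sketch below assumes a hypothetical processing log with `posted` and `manual_touch` flags per document, and the 0.90 target is only a placeholder.

```python
def straight_through_rate(records):
    """Share of documents posted to the target system with no manual touch.

    records: iterable of dicts with boolean 'posted' and 'manual_touch' keys
    (a hypothetical log schema).
    """
    records = list(records)
    if not records:
        return 0.0
    clean = sum(1 for r in records if r["posted"] and not r["manual_touch"])
    return clean / len(records)


def pilot_passes(records, target=0.90):
    """True when the measured straight-through rate meets the agreed target."""
    return straight_through_rate(records) >= target
```

Agreeing on the target and the log fields before the pilot starts gives everyone the same definition of success, whatever the model's standalone accuracy turns out to be.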

4. They Build Capability, Not Dependency

Surviving implementations invest in internal capability from day one. They don't outsource all AI knowledge to the vendor. They ensure that:

- Internal teams understand how the system makes decisions

- Configuration and tuning can be done without vendor involvement

- The organization can expand scope independently

- Knowledge doesn't walk out the door when the vendor's implementation team leaves

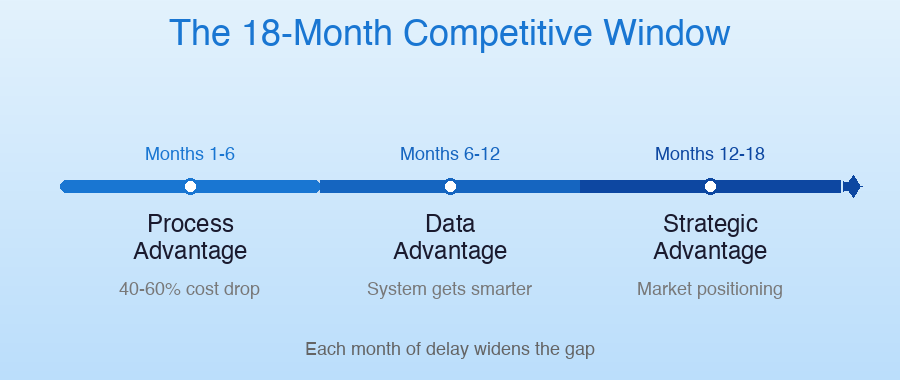

The Competitive Window

Here's what makes this urgent: the organizations that successfully deploy AI now are building compounding advantages that will be difficult — perhaps impossible — for laggards to overcome.

The 18-Month Advantage Timeline

- Months 1–6, Process Advantage: Early adopters automate core workflows while competitors still process manually. Cost per transaction drops 40–60%.

- Months 6–12, Data Advantage: Processed data creates institutional intelligence. Patterns emerge. The system gets smarter with every document, making the gap wider.

- Months 12–18, Strategic Advantage: Real-time document intelligence enables new service offerings, faster decision-making, and market positioning that manual operators cannot match.

Each month of delay isn't just a month of missed efficiency. It's a month where competitors who got it right are pulling further ahead, their systems learning and improving while yours sits in a Jupyter notebook.

The DoDocs Perspective

We built DoDocs specifically to close the gap between AI demo and AI production. Our approach directly addresses each of the three killers:

- Against the Demo-to-Production Gap: Our platform is tested on real-world documents from day one — messy, inconsistent, multilingual. We don't hide behind clean demo data because our customers don't live in clean-data environments.

- Against Integration Theater: DoDocs provides production-ready APIs, pre-built connectors, and workflow automation that delivers end-to-end value, not standalone AI tricks. The extracted data flows directly where it needs to go.

- Against Boil-the-Ocean: Our deployment model is designed for rapid, focused starts. Customers go live with one document type in weeks, then expand based on proven results. No multi-year transformation programs required.

Don't Let Your AI Pilot Become Another Headstone

See how DoDocs takes document intelligence from pilot to production in weeks, not months.

Start with your hardest documents. Test with your real data. Deploy into your actual workflows.

About the Author

Dan Gudkov is CEO and Founder at DoDocs.ai, with extensive experience in enterprise AI deployment and document intelligence. Connect with Dan on LinkedIn.

This article was originally published on Dan Gudkov's Substack and has been adapted for the DoDocs audience with permission.